Support vector machines can also be used to fit a linear regression.

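The same maximum-margin idea carries over to linear regression. Instead of maximizing a margin that separates two classes, we maximize a margin that contains as many of the data points (x, y) as possible. Using the same iris dataset, we will apply this idea to fit a line relating sepal length to petal width.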

The corresponding loss function is max(0, |y_i - (A x_i + b)| - ε). Here ε is half the margin width, so any data point that lies inside this band contributes a loss of 0.
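To check the loss by hand, here is a minimal NumPy sketch of the quantity the TensorFlow code below averages over a batch, (1/n) Σ max(0, |y_i - (A x_i + b)| - ε). The helper name `epsilon_insensitive_loss` and the toy numbers are illustrative assumptions, not part of the original.

```python
import numpy as np

def epsilon_insensitive_loss(x, y, A, b, epsilon):
    """Hypothetical helper: mean of max(0, |y_i - (A*x_i + b)| - epsilon)."""
    residuals = np.abs(y - (A * x + b))
    # Points within epsilon of the line contribute exactly zero loss
    return np.mean(np.maximum(0.0, residuals - epsilon))

# Made-up toy data around the line y = 2x + 1, with epsilon = 0.5
x = np.array([0.0, 1.0, 2.0])
y = np.array([1.2, 4.0, 4.0])
# Residuals are 0.2 (inside the band -> 0), 1.0 (-> 0.5), and 1.0 (-> 0.5)
print(epsilon_insensitive_loss(x, y, A=2.0, b=1.0, epsilon=0.5))
# prints 0.3333333333333333, i.e. (0 + 0.5 + 0.5) / 3
```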

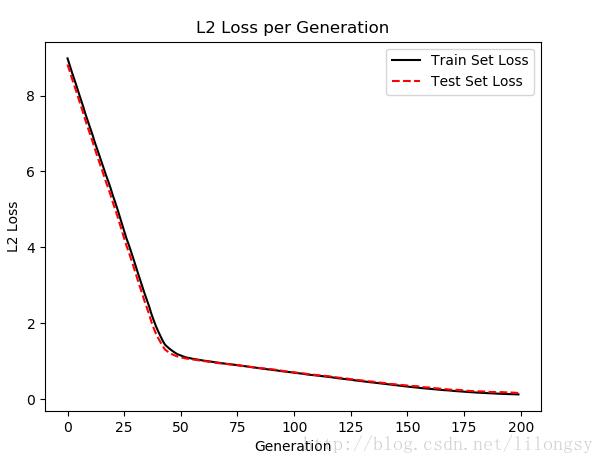

```python
# SVM Regression
#----------------------------------
#
# This function shows how to use TensorFlow to
# solve support vector regression. We are going
# to find the line that has the maximum margin
# which INCLUDES as many points as possible
#
# We will use the iris data, specifically:
#  y = Sepal Length
#  x = Petal Width
#
# Written against the TensorFlow 1.x graph API (tf.Session, tf.placeholder).
# Under TensorFlow 2.x, use `import tensorflow.compat.v1 as tf`
# followed by `tf.disable_v2_behavior()` instead of the plain import.
import matplotlib.pyplot as plt
import numpy as np
import tensorflow as tf
from sklearn import datasets
from tensorflow.python.framework import ops
ops.reset_default_graph()

# Create graph
sess = tf.Session()

# Load the data
# iris.data = [(Sepal Length, Sepal Width, Petal Length, Petal Width)]
iris = datasets.load_iris()
x_vals = np.array([x[3] for x in iris.data])
y_vals = np.array([y[0] for y in iris.data])

# Split data into train/test sets (80% / 20%)
train_indices = np.random.choice(len(x_vals), round(len(x_vals)*0.8), replace=False)
test_indices = np.array(list(set(range(len(x_vals))) - set(train_indices)))
x_vals_train = x_vals[train_indices]
x_vals_test = x_vals[test_indices]
y_vals_train = y_vals[train_indices]
y_vals_test = y_vals[test_indices]

# Declare batch size
batch_size = 50

# Initialize placeholders
x_data = tf.placeholder(shape=[None, 1], dtype=tf.float32)
y_target = tf.placeholder(shape=[None, 1], dtype=tf.float32)

# Create variables for linear regression
A = tf.Variable(tf.random_normal(shape=[1,1]))
b = tf.Variable(tf.random_normal(shape=[1,1]))

# Declare model operations
model_output = tf.add(tf.matmul(x_data, A), b)

# Declare loss function
# = max(0, abs(target - predicted) - epsilon)
# 1/2 margin width parameter = epsilon
epsilon = tf.constant([0.5])
# Margin term in loss
loss = tf.reduce_mean(tf.maximum(0., tf.subtract(tf.abs(tf.subtract(model_output, y_target)), epsilon)))

# Declare optimizer
my_opt = tf.train.GradientDescentOptimizer(0.075)
train_step = my_opt.minimize(loss)

# Initialize variables
init = tf.global_variables_initializer()
sess.run(init)

# Training loop
train_loss = []
test_loss = []
for i in range(200):
    # Sample a random batch (with replacement) and take one optimizer step
    rand_index = np.random.choice(len(x_vals_train), size=batch_size)
    rand_x = np.transpose([x_vals_train[rand_index]])
    rand_y = np.transpose([y_vals_train[rand_index]])
    sess.run(train_step, feed_dict={x_data: rand_x, y_target: rand_y})

    # Record the loss on the full training and test sets
    temp_train_loss = sess.run(loss, feed_dict={x_data: np.transpose([x_vals_train]), y_target: np.transpose([y_vals_train])})
    train_loss.append(temp_train_loss)

    temp_test_loss = sess.run(loss, feed_dict={x_data: np.transpose([x_vals_test]), y_target: np.transpose([y_vals_test])})
    test_loss.append(temp_test_loss)
    if (i+1)%50==0:
        print('-----------')
        print('Generation: ' + str(i+1))
        print('A = ' + str(sess.run(A)) + ' b = ' + str(sess.run(b)))
        print('Train Loss = ' + str(temp_train_loss))
        print('Test Loss = ' + str(temp_test_loss))

# Extract Coefficients
[[slope]] = sess.run(A)
[[y_intercept]] = sess.run(b)
[width] = sess.run(epsilon)

# Get best fit line, plus the upper/lower edges of the band at +/- epsilon
best_fit = []
best_fit_upper = []
best_fit_lower = []
for i in x_vals:
    best_fit.append(slope*i+y_intercept)
    best_fit_upper.append(slope*i+y_intercept+width)
    best_fit_lower.append(slope*i+y_intercept-width)

# Plot fit with data
plt.plot(x_vals, y_vals, 'o', label='Data Points')
plt.plot(x_vals, best_fit, 'r-', label='SVM Regression Line', linewidth=3)
plt.plot(x_vals, best_fit_upper, 'r--', linewidth=2)
plt.plot(x_vals, best_fit_lower, 'r--', linewidth=2)
plt.ylim([0, 10])
plt.legend(loc='lower right')
plt.title('Sepal Length vs Petal Width')
plt.xlabel('Petal Width')
plt.ylabel('Sepal Length')
plt.show()

# Plot loss over time (epsilon-insensitive loss, not L2)
plt.plot(train_loss, 'k-', label='Train Set Loss')
plt.plot(test_loss, 'r--', label='Test Set Loss')
plt.title('Loss per Generation')
plt.xlabel('Generation')
plt.ylabel('Loss')
plt.legend(loc='upper right')
plt.show()
```

Output:

```
-----------
Generation: 50
A = [[ 2.91328382]] b = [[ 1.18453276]]
Train Loss = 1.17104
Test Loss = 1.1143
-----------
Generation: 100
A = [[ 2.42788291]] b = [[ 2.3755331]]
Train Loss = 0.703519
Test Loss = 0.715295
-----------
Generation: 150
A = [[ 1.84078252]] b = [[ 3.40453291]]
Train Loss = 0.338596
Test Loss = 0.365562
-----------
Generation: 200
A = [[ 1.35343242]] b = [[ 4.14853334]]
Train Loss = 0.125198
Test Loss = 0.16121
```

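Figure: SVM regression of sepal length against petal width on the iris dataset, with margin half-width ε = 0.5.

Figure: Loss of the SVM regression at each generation (training and test sets).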
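For readers without a TensorFlow 1.x environment, the training loop above can be approximated in plain NumPy. This is a sketch under stated assumptions. It replaces TensorFlow's automatic differentiation with a hand-written subgradient of the ε-insensitive loss. It mirrors the original hyperparameters (learning rate 0.075, batch size 50, ε = 0.5). It also runs on synthetic data rather than iris, so it has no scikit-learn dependency. The names `fit_svr_line` and `svr_loss` are hypothetical.

```python
import numpy as np

def fit_svr_line(x, y, epsilon=0.5, lr=0.075, generations=200, batch_size=50, seed=0):
    """Hypothetical helper: fit y ~ A*x + b by minibatch subgradient descent
    on the epsilon-insensitive loss mean(max(0, |y - (A*x + b)| - epsilon))."""
    rng = np.random.default_rng(seed)
    A, b = rng.normal(), rng.normal()             # random init, as in the TF version
    for _ in range(generations):
        idx = rng.choice(len(x), size=batch_size) # batch sampled with replacement
        xb, yb = x[idx], y[idx]
        r = yb - (A * xb + b)                     # residuals on the batch
        outside = np.abs(r) > epsilon             # points inside the band have zero gradient
        # d/db of max(0, |r| - eps) is -sign(r) outside the band; d/dA is that times x
        g = -np.sign(r) * outside
        A -= lr * np.mean(g * xb)
        b -= lr * np.mean(g)
    return A, b

def svr_loss(x, y, A, b, epsilon=0.5):
    """Mean epsilon-insensitive loss over all points."""
    return np.mean(np.maximum(0.0, np.abs(y - (A * x + b)) - epsilon))

# Synthetic data (an assumption, not iris): y = x + 4.5 plus Gaussian noise
data_rng = np.random.default_rng(42)
x = data_rng.uniform(0.0, 2.5, size=150)
y = x + 4.5 + data_rng.normal(0.0, 0.3, size=150)

A, b = fit_svr_line(x, y, generations=2000)
inside = np.mean(np.abs(y - (A * x + b)) <= 0.5)
print(f"A = {A:.3f}, b = {b:.3f}, loss = {svr_loss(x, y, A, b):.4f}")
print(f"fraction of points inside the band: {inside:.2f}")
```

The usage example runs 2,000 generations instead of 200 because the losses printed above were still falling at generation 200.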

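Intuitively, the SVM regression algorithm tries to fit as many data points as possible inside a band of total width 2ε around the line, so the fitted line depends meaningfully on the choice of ε. If ε is too small, the algorithm cannot fit many points inside the band. If ε is too large, many different lines will fit all the data points inside the band. The author prefers smaller values of ε, because data points near the edge of the band contribute less loss than points farther away.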
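To make this trade-off concrete, the sketch below counts how many candidate lines achieve zero loss (keep every point inside the band) for several values of ε. It is an illustrative sketch on synthetic data. The helper `zero_loss_fraction`, the data, and the (A, b) grid ranges are all assumptions chosen for the demonstration.

```python
import numpy as np

def zero_loss_fraction(x, y, epsilon, slopes, intercepts):
    """Hypothetical helper: fraction of (A, b) grid points whose line keeps
    every data point within +/- epsilon, i.e. has zero epsilon-insensitive loss."""
    A = slopes[:, None, None]            # shape (n_slopes, 1, 1)
    b = intercepts[None, :, None]        # shape (1, n_intercepts, 1)
    residuals = np.abs(y[None, None, :] - (A * x[None, None, :] + b))
    return np.mean(np.all(residuals <= epsilon, axis=-1))

# Synthetic data (an assumption, not iris)
rng = np.random.default_rng(1)
x = rng.uniform(0.0, 2.5, size=100)
y = x + 4.5 + rng.normal(0.0, 0.3, size=100)

# Grid of candidate lines
slopes = np.linspace(0.0, 2.0, 81)
intercepts = np.linspace(3.5, 5.5, 81)
for eps in [0.25, 0.5, 1.0, 2.0]:
    print(f"epsilon = {eps}: {zero_loss_fraction(x, y, eps, slopes, intercepts):.3f}")
```

A larger ε only loosens the constraint, so the fraction never decreases as ε grows. Once ε is large, the loss alone no longer singles out one line.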
